Here are my notes to help others on their journey of preparing for the AWS Certified Security Specialty exam. Please do not treat my notes as your only source of preparation. They are only a summary, and AWS changes its services quickly, so some of these notes can become obsolete fast.

Before we start, I recommend taking the acloud.guru and Udemy courses to understand the basics. To pass the exam you need a lot of practice with hands-on labs, or working experience in the AWS security field.

Recommended learning

- https://www.udemy.com/share/101XbiBkASeFpaRnQ=/

- https://acloud.guru/learn/aws-certified-security-specialty

- https://www.whizlabs.com/aws-certified-security-specialty/practice-test/

- READ CAREFULLY : AWS_Security_Best_Practices.pdf

Policies and users

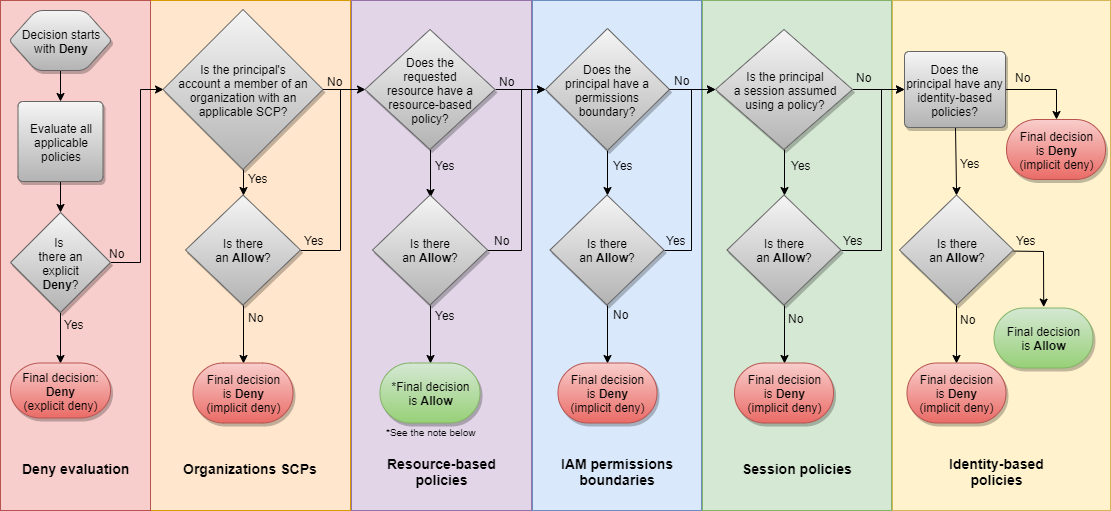

IAM is by far the biggest part of the exam. You need to master all the types of policies, conditions, and policy evaluation logic at the policy JSON level. You also need to understand the differences between the versions of the policy language and their effect on policy variables.

The core of IAM is users, groups, policies, and roles.

- A power user doesn’t have access to IAM management.

- IAM is global; it applies to all Regions in AWS.

3 types of policies:

- AWS managed policies (managed by AWS, applicable across multiple AWS accounts)

- Customer managed policies (can be attached to multiple users, groups, and roles)

- Inline policies (embedded in exactly one user, group, or role)

Size limits for inline policies:

- 2 KB (2,048 characters) for users

- 5 KB (5,120 characters) for groups

- 10 KB (10,240 characters) for roles

- S3 bucket policies can be up to 20 KB.
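A minimal sketch of checking a policy document against the limits above. The `LIMITS` table and both function names are made up for this illustration, and measuring the size as the compact JSON length (without whitespace) is an assumption about how the limits are counted.

```python
import json

# Size limits from the list above. Assumption: the size is measured
# on the policy serialized without any extra whitespace.
LIMITS = {"user": 2048, "group": 5120, "role": 10240, "s3_bucket": 20480}


def policy_size(policy: dict) -> int:
    """Length of the policy when serialized as compact JSON."""
    return len(json.dumps(policy, separators=(",", ":")))


def fits(policy: dict, attached_to: str) -> bool:
    """True if the compact policy is within the limit for that entity type."""
    return policy_size(policy) <= LIMITS[attached_to]


# Hypothetical policy with 40 statements, only to exercise the check.
big_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "s3:GetObject",
            "Resource": f"arn:aws:s3:::bucket-{i}/*",
        }
        for i in range(40)
    ],
}
print(policy_size(big_policy), fits(big_policy, "user"), fits(big_policy, "group"))
```

A policy that is too large for a user can still fit a group or a role, which is one reason to prefer managed policies or roles over long inline user policies.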

The policy generator does not append /* to the S3 bucket ARN. For object-level actions such as s3:DeleteObject, this part must be added manually.

{

"Id": "Policy1580505446178",

"Version": "2012-10-17",

"Statement": [

{

"Sid": "Stmt1580505427899",

"Action": [

"s3:DeleteObject"

],

"Effect": "Allow",

"Resource": "arn:aws:s3:::rambotest/*",

"Principal": {

"AWS": [

"arn:aws:iam::123456789876:user/rambo"

]

}

}

]

}
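The same policy can be built programmatically so the trailing /* is never forgotten. This is only a sketch: the helper names `object_resource` and `delete_object_policy` are invented for this example, and the bucket and user are the ones from the policy above.

```python
import json


def object_resource(bucket: str) -> str:
    """ARN that matches every object in the bucket (note the trailing /*)."""
    return f"arn:aws:s3:::{bucket}/*"


def delete_object_policy(bucket: str, principal_arn: str) -> dict:
    """Bucket policy allowing one principal to delete objects in the bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:DeleteObject"],
                "Resource": object_resource(bucket),
                "Principal": {"AWS": [principal_arn]},
            }
        ],
    }


policy = delete_object_policy("rambotest", "arn:aws:iam::123456789876:user/rambo")
print(json.dumps(policy, indent=2))
```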

Within one account, S3 bucket policies and IAM policies are evaluated together: an allow in either one is enough, and an explicit deny in either one wins. ACLs are a legacy mechanism older than IAM.

If you need to define rules for an individual object, use an object ACL.

An explicit deny always beats an allow. The difference between authenticated and unauthenticated (anonymous) users is also part of IAM.
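The rule that an explicit deny wins can be sketched in a few lines. This is a strongly simplified model for a single account, not the real IAM engine: it ignores conditions, principals, permission boundaries, and SCPs, and it matches wildcards with Python's `fnmatchcase`, which is an assumption that is only roughly equivalent to IAM matching.

```python
from fnmatch import fnmatchcase


def matches(patterns, value: str) -> bool:
    """True if value matches any of the patterns ('*' wildcards allowed)."""
    if isinstance(patterns, str):
        patterns = [patterns]
    return any(fnmatchcase(value, p) for p in patterns)


def evaluate(statements, action: str, resource: str) -> str:
    """Return 'Allow' or 'Deny' for one request against a list of statements."""
    decision = "Deny"  # implicit deny: nothing is allowed by default
    for s in statements:
        if matches(s["Action"], action) and matches(s["Resource"], resource):
            if s["Effect"] == "Deny":
                return "Deny"  # an explicit deny always wins
            decision = "Allow"
    return decision


# Hypothetical statements: allow everything in the bucket, deny deletes in logs/.
statements = [
    {"Effect": "Allow", "Action": "s3:*", "Resource": "arn:aws:s3:::rambotest/*"},
    {"Effect": "Deny", "Action": "s3:DeleteObject",
     "Resource": "arn:aws:s3:::rambotest/logs/*"},
]
print(evaluate(statements, "s3:DeleteObject", "arn:aws:s3:::rambotest/logs/a.gz"))
```

The order of the statements does not matter: the deny statement blocks the delete even though an allow statement also matches it.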

To pass the exam, you need to know every bit of the policy evaluation logic and how the policy types are prioritized. The links below can help you understand the details.

Principal element – specifies the IAM user, role, or federated user that is accessing the resource. It cannot be used in identity-based (IAM) policies. The Principal element is part of a resource-based policy (for example an S3 bucket policy).

NotPrincipal – excludes the listed principals from the policy statement. For example, deny some actions to everyone except the listed principals.

Links you must know to pass the exam:

- Policy Evaluation Logic – AWS Identity and Access Management

- Using policy conditions with AWS KMS – AWS Key Management Service

- IAM Policy Elements: Variables and Tags – AWS Identity and Access Management

- Configure S3 Bucket Policy to Store Only Objects Encrypted by KMS Key

S3

S3 (Simple Storage Service) is object-based cloud storage. It provides multiple security mechanisms.

Encryption of S3 bucket:

- SSE-S3 – S3 managed key

- SSE-KMS – KMS managed key; the administrator can choose a specific key.

- Client-side encryption – data is encrypted by the customer before it is uploaded to S3.

Enforcing SSL on an S3 bucket is done with a Condition statement on the aws:SecureTransport key:

(Deny) "aws:SecureTransport": "false"

(Allow) "aws:SecureTransport": "true"

We can deny everything that is not transmitted over secure transport.
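As a sketch, the deny variant can be generated as a bucket policy statement like this. The function name and the `Sid` value are invented for the example; the condition key is the one named above.

```python
import json


def deny_insecure_transport(bucket: str) -> dict:
    """Statement denying every S3 action on the bucket over plain HTTP."""
    return {
        "Sid": "DenyInsecureTransport",  # hypothetical statement ID
        "Effect": "Deny",
        "Principal": "*",
        "Action": "s3:*",
        "Resource": [
            f"arn:aws:s3:::{bucket}",    # the bucket itself
            f"arn:aws:s3:::{bucket}/*",  # every object in the bucket
        ],
        "Condition": {"Bool": {"aws:SecureTransport": "false"}},
    }


statement = deny_insecure_transport("rambotest")
print(json.dumps(statement, indent=2))
```

Because an explicit deny overrides any allow, this single statement is enough to block unencrypted requests regardless of what other policies grant.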

A customer managed policy is used for cross-region replication (CRR). Note that AWS also supports same-region replication (SRR).

Replication rules and notes:

- Versioning must be enabled on both buckets.

- The Source bucket owner must set up the permissions for replication.

- The owner of the destination bucket must grant the source bucket owner permissions to store the replicas.

- If the object is owned by someone else, the object owner must grant the bucket owner the READ and READ_ACP permissions.

- The best practice is to replicate the logs to a second AWS account (for example the S3 bucket with CloudTrail logs).

OWNERSHIP:

- The ownership of an object during replication can be changed.

- To replicate the object you need read permission for the object and its ACL (bucket-owner-full-control ACL on the object).

WHAT IS REPLICATED: new objects created after CRR is enabled; objects encrypted with SSE-S3 by default (SSE-KMS objects must be explicitly enabled); delete markers; object metadata; ACL updates; object tags; objects that the bucket owner has permission to read.

WHAT IS NOT REPLICATED: deletions of a specific object version; anything that existed before CRR was enabled; SSE-C encrypted objects; objects that the bucket owner has no permission to read.

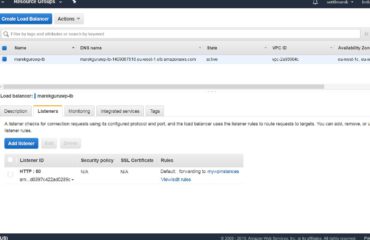

Securing S3 using CloudFront:

- Important note: CloudFront and ELB / ALB need different certificates.

- To get a certificate from ACM you need to click on “Request or import a certificate with ACM”

Difference between TCP and HTTP listener on ELB:

- An HTTP listener can add HTTP headers such as X-Forwarded-For and X-Forwarded-Port.

- A TCP listener does not add any headers. It just blindly forwards the request.

Origin:

- Edit the origin of the CloudFront distribution.

- Add an origin access identity (OAI).

- Update the bucket policy to grant CloudFront access to the bucket.

Presigned URLs:

- To create a presigned URL, run: aws s3 presign s3://

- The default validity is 3600 seconds (1 hour). It can be changed with --expires-in.

- Created via the SDK or CLI; the returned presigned URL contains the access key ID.

CloudFront: use signed URLs in the following cases:

- You want to use an RTMP distribution. Signed cookies aren’t supported for RTMP distributions.

- You want to restrict access to individual files, for example, an installation download for your application.

- Your users are using a client (for example, a custom HTTP client) that doesn’t support cookies.

To create a signed URL you need a key pair ID and a private key.

Use signed cookies in the following cases:

- You want to provide access to multiple restricted files, for example, all of the files for a video in HLS format or all of the files in the subscribers’ area of the website.

- You don’t want to change your current URLs.

PROBLEM : Presigned URL for S3 Bucket Expires Before Specified Expiration Time

SSL for CloudFront – the default is the cloudfront.net certificate. You can configure your own certificate, or use one from AWS Certificate Manager, which must be in us-east-1.

- https://docs.aws.amazon.com/AmazonCloudFront/latest/DeveloperGuide/private-content-restricting-access-to-s3.html

- Restricting the Geographic Distribution of Your Content – Amazon CloudFront

- Restricting Access to Amazon S3 Content by Using an Origin Access Identity – Amazon CloudFront

- Requiring HTTPS for Communication Between CloudFront and Your Custom Origin – Amazon CloudFront

STS – Security token service

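- Token validity ranges from 15 minutes up to 36 hours, depending on the API (at most 12 hours for AssumeRole, up to 36 hours for GetSessionToken and GetFederationToken).

- STS returns an access key ID, a secret access key, a session token, and the expiration time.

- Workflow : User credentials > App > Identity broker > LDAP > Identity broker > STS > Identity broker > App > AWS Resource > AWS IAM

- Identity broker: an example is an Amazon Cognito identity pool.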

STS actions necessary to know:

- AssumeRole

- AssumeRoleWithSAML

- AssumeRoleWithWebIdentity

- DecodeAuthorizationMessage

- GetAccessKeyInfo

- GetCallerIdentity

- GetFederationToken

- GetSessionToken

List of very important readings:

- Assume-role-with-saml — AWS CLI 1.18.8 Command Reference

- Requesting Temporary Security Credentials – AWS Identity and Access Management

- How to Use an External ID When Granting Access to Your AWS Resources to a Third Party – AWS Identity and Access Management

Web Identity federation

- The user provides the web token from Facebook / Google, and Cognito provides temporary AWS credentials.

- Provides sign-up / sign-in and guest user access.

- Syncs user data across devices.

- Recommended for web identity federation on mobile devices that use AWS resources.

List of very important readings:

Federation – Amazon Web Services (AWS)

Cognito user pools

User pools act more as an identity provider. Cognito identity pools, on the other hand, work more like an identity broker. A user pool can ingest identities from external sources like Facebook, Google, etc. User pools mainly cover sign-in and sign-up activities. Cognito also provides a customizable UI for web users. The main advantage of Cognito user pools is user directory management and user profiles.

A very important topic is customized workflows using AWS Lambda triggers. Lambda triggers, as a serverless mechanism, can help you build, for example, your own CAPTCHA mechanism, as it’s not provided out of the box with user pools.

- Pre Sign-up Lambda Trigger

- Post Confirmation Lambda Trigger

- Pre Authentication Lambda Trigger

- Post Authentication Lambda Trigger

Cognito endpoint format: https://marektest.auth.eu-west-1.amazoncognito.com (can be found under App integration)

https://marektest.auth.eu-west-1.amazoncognito.com/login?response_type=token&client_id=7ep42tosfjnf314ksfnt6vdiko&redirect_uri=https://www.sottl.cz (Sign up and Sign in page)

After sign in I should see the token generated from Cognito:

Note: Carefully look for access_token, id_token and expiration.

https://www.sottl.cz/#id_token=eyJraWQiOiJ0T0x4aGlWQnpUQUZvOFJSSmZUaHBRcnh4TFZkUmp1eloxMVRqMmNqWEdBPSIsImFsZyI6IlJTMjU2In0.eyJhdF9oYXNoIjoiaWtIVmJ3THA0SEcwYzd2Umw2SXBEQSIsInN1YiI6ImQwMGI2YTFjLTEyODgtNGZkZS1iMmQ1LTdhY2QyODRhNGQwOSIsImF1ZCI6IjdlcDQydG9zZmpuZjMxNGtzZm50NnZkaWtvIiwiZW1haWxfdmVyaWZpZWQiOnRydWUsImV2ZW50X2lkIjoiZWZiOTk4YTMtMDJmZS00YmNmLWIzMWUtMzliYjE4MTU5MjE1IiwidG9rZW5fdXNlIjoiaWQiLCJhdXRoX3RpbWUiOjE1ODA2NjIwNDQsImlzcyI6Imh0dHBzOlwvXC9jb2duaXRvLWlkcC5ldS13ZXN0LTEuYW1hem9uYXdzLmNvbVwvZXUtd2VzdC0xX20wS0FvcXZmWSIsImNvZ25pdG86dXNlcm5hbWUiOiJtYXJlayIsImV4cCI6MTU4MDY2NTY0NCwiaWF0IjoxNTgwNjYyMDQ0LCJlbWFpbCI6InNvdHRsbWFyZWtAc2V6bmFtLmN6In0.B2D4oYG01EtLu7vJXjItxbuV7eWaq03Wa7FrsCu5M1rFX5phZsjbn_OGLxt9pyBhQr-4xK3B8TxLkyXhGj0G7nG0JaTDWmuoaUVwxK1FHZcFegwIpgSd2Ekjpv0_X7gvBv_H5LwE0LHcOI1StmjGZbO_Kb9wwy2PvJNms7otIBVcdekb2J6_xoBwjB49L-2EWncTZHnucsX7R-K-dXT9f649cuaYhbkGVlU7yN8TWQxVvLjPzMi3yVrX0nQVCJA4iQCGmbX7PJoty6FqWtfVCTJgpgyPdpXPYS6KzhKkA0GQWYtwk6P3t6unKmnLF1hM4aWlvXXRs03WV3x_qVnhsQ&access_token=eyJraWQiOiJwN2JjMmwrWFBkc0JsOTB6M1lwa0dMVmp4d0hvSXVuSTFBY0YzSG9kazZFPSIsImFsZyI6IlJTMjU2In0.eyJzdWIiOiJkMDBiNmExYy0xMjg4LTRmZGUtYjJkNS03YWNkMjg0YTRkMDkiLCJldmVudF9pZCI6ImVmYjk5OGEzLTAyZmUtNGJjZi1iMzFlLTM5YmIxODE1OTIxNSIsInRva2VuX3VzZSI6ImFjY2VzcyIsInNjb3BlIjoiYXdzLmNvZ25pdG8uc2lnbmluLnVzZXIuYWRtaW4gcGhvbmUgb3BlbmlkIHByb2ZpbGUgZW1haWwiLCJhdXRoX3RpbWUiOjE1ODA2NjIwNDQsImlzcyI6Imh0dHBzOlwvXC9jb2duaXRvLWlkcC5ldS13ZXN0LTEuYW1hem9uYXdzLmNvbVwvZXUtd2VzdC0xX20wS0FvcXZmWSIsImV4cCI6MTU4MDY2NTY0NCwiaWF0IjoxNTgwNjYyMDQ0LCJ2ZXJzaW9uIjoyLCJqdGkiOiJlOWQ3NWRmNC1kZDA0LTQyYTktOGEwZS02ODQxMTM2MzhjZGUiLCJjbGllbnRfaWQiOiI3ZXA0MnRvc2ZqbmYzMTRrc2ZudDZ2ZGlrbyIsInVzZXJuYW1lIjoibWFyZWsifQ.Xvvzjwjs5VoIEuajjYPIRj6K7RqF3MuMl2-EQNfztZTMUoL07s90eobZbyDL7J-2XUR13_2hUv_j1jtlTw2INP2xoGHRwahoOXFG13fFAqiWZjiSSmxqLzIuLH6f_Ghbv2dTtgyFEs72DXavXi7aJiJTvOjgE1lKtfx1UmSTZ-sr7WW5gk3iMW4tHWwMwS5qzpNt15vXXDCbaKtCXWHzfNVEidqTAYcqZPqxsre1uru3_wy_os3gRxGF6Do6onma8vGNJNqOmElqqkFXHC-OpRfOFrBWIzjmrJ70r2go2l9FGlyZz-tQUFdwRJ8awoNuS4
Z08Zmjo_Udm3oK_9Wj2Q&expires_in=3600&token_type=Bearer
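The id_token and access_token are JWTs: three base64url segments separated by dots. A minimal sketch of reading the claims (such as token_use and exp) from the middle segment follows. It does not verify the signature, so it is only for inspection, and the `demo` token is a hypothetical one built inside the example rather than the real token above.

```python
import base64
import json


def b64url_decode(segment: str) -> bytes:
    """Decode base64url data, restoring the padding that JWTs strip."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def b64url_encode(data: bytes) -> str:
    """Encode bytes as unpadded base64url, as used in JWT segments."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def jwt_claims(token: str) -> dict:
    """Return the UNVERIFIED claims from the payload segment of a JWT."""
    _header, payload, _signature = token.split(".")
    return json.loads(b64url_decode(payload))


# Hypothetical token with a fake signature, built only to exercise the decoder.
demo = ".".join([
    b64url_encode(json.dumps({"alg": "RS256"}).encode()),
    b64url_encode(json.dumps({"token_use": "id", "exp": 1580665644}).encode()),
    "fake-signature",
])
claims = jwt_claims(demo)
print(claims["token_use"], claims["exp"])
```

In a real application the signature must be checked against the user pool's public keys before any claim is trusted.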

I can create a group in the user pool and assign it a specific IAM role. The members of the group are users from the Cognito user pool.

Links necessary to read:

- Integrate a REST API with an Amazon Cognito User Pool – Amazon API Gateway

- Adding Social Identity Providers to a User Pool – Amazon Cognito

- Customizing User Pool Workflows with Lambda Triggers – Amazon Cognito

- Using Identity Pools (Federated Identities) – Amazon Cognito

- Configuring a User Pool App Client – Amazon Cognito

Glacier Vault lock

Compliance control over Glacier archives with a lock policy. Vault Lock brings the WORM approach to Glacier archives (for example archived logs which must be stored for regulatory reasons).

Glacier – low-cost storage for archives (zip, tar)

Archive – one or more zip or tar files

Vault – a container for one or more archives

READ : Amazon S3 Glacier Vault Lock – Amazon S3 Glacier

Glacier Vault Lock is not the only lock type available. There is also S3 Object Lock: Locking Objects Using Amazon S3 Object Lock – Amazon Simple Storage Service

S3 object lock

Two options are available: one based on a retention period and a second based on a legal hold. In both cases the object is stored under the WORM (write once, read many) approach.

Configuration steps (Vault Lock):

- Initiate the lock and attach the policy to the vault (in-progress state).

- A 24-hour validation period follows, in which the policy can be aborted. Once the lock is completed, the policy is applied and cannot be changed.

Main features: vault lock policy, retention policy, implementation of WORM (write once, read many). Can be used for regulatory and compliance controls.

Behavior: after the policy is set up, you have 24 hours for validation. Once the lock is completed, the policy becomes immutable.

AWS Organizations

AWS Cloud trail

CloudTrail logs every API call in AWS. All AWS services are designed to use APIs, so CloudTrail brings several auditing advantages and can be easily connected with CloudWatch. A good use case is auditing root user actions. It logs only API calls, not SSH sessions on instances. The CLI works through the AWS API as well, so its calls are logged too.

You can analyse CloudTrail logs with a combination of S3 and Athena. Athena provides database-like log analysis.

It logs metadata around API calls:

- Identity of the API caller

- Time of API call

- The Source IP address

- Request parameters

- Response elements returned by the service
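To make the list above concrete, here is a small sketch that pulls those fields out of a CloudTrail log file. The `SAMPLE` record is hypothetical and heavily trimmed (real records carry many more fields), and the `summarize` helper is a name invented for this example.

```python
import json

# Hypothetical, heavily trimmed CloudTrail log file content.
SAMPLE = """
{"Records": [{
  "eventTime": "2020-02-02T17:00:44Z",
  "eventSource": "s3.amazonaws.com",
  "eventName": "DeleteBucket",
  "sourceIPAddress": "203.0.113.10",
  "userIdentity": {"type": "Root", "arn": "arn:aws:iam::123456789876:root"},
  "requestParameters": {"bucketName": "rambotest"},
  "responseElements": null
}]}
"""


def summarize(record: dict) -> dict:
    """Pick the caller, time, source IP, request and response from a record."""
    return {
        "who": record["userIdentity"].get("arn"),
        "when": record["eventTime"],
        "from": record["sourceIPAddress"],
        "call": f'{record["eventSource"]}:{record["eventName"]}',
        "request": record.get("requestParameters"),
        "response": record.get("responseElements"),
    }


summaries = [summarize(r) for r in json.loads(SAMPLE)["Records"]]
# Calls made by the root user are a typical thing to alert on.
root_calls = [s for s in summaries if (s["who"] or "").endswith(":root")]
print(root_calls)
```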

Notes for CloudTrail in a nutshell:

- The visibility of the CloudTrail logs in event history is 90 days.

- If you want to log S3 bucket data events and Lambda data events, you must explicitly enable them.

- Logs are sent to an S3 bucket (a lifecycle management policy is good to set up).

- Logs are delivered every 5 minutes (there can be a 15-minute delay).

- Aggregation is supported on the region level.

- Aggregation is supported on the account level.

- Events are logged only in the timeframe in which CloudTrail logging is enabled.

Cloud trail insights – Insights events are records that capture an unusual call volume of write management APIs in your AWS account. Additional charges apply.

CloudTrail digest files – used to verify that the logs are valid and have not been changed. Basically they provide an integrity check: a digest file contains the hashes of the log files.

For integrity validation, SHA-256 is used for hashing and SHA-256 with RSA for digital signing.
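The hashing half of the check can be sketched as follows. This is a simplification: the `digest_entry` dictionary only imitates one log file entry of a digest file, the exact bytes that CloudTrail hashes are an assumption to confirm in the documentation, and the RSA signature check of the digest file itself is left out. In practice the `aws cloudtrail validate-logs` CLI command does the full validation.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 hash of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def log_is_intact(log_bytes: bytes, digest_entry: dict) -> bool:
    """Compare a log file's SHA-256 hash with the hash stored in the digest."""
    return sha256_hex(log_bytes) == digest_entry["hashValue"]


# Hypothetical log content and the digest entry recorded for it.
original_log = b'{"Records": []}'
digest_entry = {"hashAlgorithm": "SHA-256", "hashValue": sha256_hex(original_log)}

print(log_is_intact(original_log, digest_entry))
print(log_is_intact(original_log + b" tampered", digest_entry))
```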

- What Is AWS CloudTrail? – AWS CloudTrail

- Creating a Trail For Your Organization in the Console – AWS CloudTrail

- Using AWS Lambda with AWS CloudTrail – AWS Lambda

AWS Config

A big part of the exam. You need to know how to set up different alarms using a combination of CloudWatch, CloudTrail, and custom metrics. AWS Config is a service for monitoring and tracking (via a timeline) configuration changes.

AWS Cloud Watch notes

AWS CloudWatch – monitoring service for AWS resources and applications in the AWS environment.

VPC flow logs – capture information about the network traffic of the ENIs within a VPC. VPC flow logs are a basic tool for every security engineer and network engineer.

IMPORTANT: https://docs.aws.amazon.com/vpc/latest/userguide/flow-logs.html

It is important for the exam to know which services are regional and which are not.
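A flow log record in the default format is one line of space-separated fields. The sketch below parses such a line; the sample record is hypothetical, and the parser assumes a complete record (records with NODATA or SKIPDATA status contain "-" placeholders and would need extra handling).

```python
from typing import NamedTuple


class FlowRecord(NamedTuple):
    """Fields of the default flow log format, in order."""
    version: int
    account_id: str
    interface_id: str
    srcaddr: str
    dstaddr: str
    srcport: int
    dstport: int
    protocol: int
    packets: int
    bytes: int
    start: int
    end: int
    action: str
    log_status: str


def parse(line: str) -> FlowRecord:
    """Parse one complete default-format flow log line."""
    f = line.split()
    return FlowRecord(int(f[0]), f[1], f[2], f[3], f[4], int(f[5]), int(f[6]),
                      int(f[7]), int(f[8]), int(f[9]), int(f[10]), int(f[11]),
                      f[12], f[13])


# Hypothetical record: an SSH attempt (TCP port 22) that was rejected.
rec = parse("2 123456789876 eni-0a1b2c3d 203.0.113.12 172.31.16.139 "
            "49761 22 6 3 180 1580662044 1580662104 REJECT OK")
rejected_ssh = rec.action == "REJECT" and rec.protocol == 6 and rec.dstport == 22
print(rec.srcaddr, rejected_ssh)
```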

Important parts of Cloud Watch service:

- Cloud watch metrics + Metric filter

- Dashboard

- Cloud watch logs

- Cloud watch events

If you want to monitor S3 operations you can use S3 event notifications to receive information about events on S3 objects. You can set up SNS notifications for your critical buckets.

Interesting study: https://docs.aws.amazon.com/AmazonS3/latest/dev/NotificationHowTo.html

Very cool stuff with Grafana: https://grafana.com/docs/grafana/latest/features/datasources/cloudwatch/#using-aws-cloudwatch-in-grafana

Setting up Alarm for SNS topic: https://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/US_SetupSNS.html

Inspector

AWS Inspector is similar to common vulnerability scanners. It provides some specific scans for AWS and can give you a very good insight into the security maturity of your target deployment. Before you go to the exam, run several assessments and study the reports to know their content. Don’t be scared by a 600-page report; the reports can be exhaustive.

Steps to run a scan of your AWS resources:

- Create an Assessment target.

- Install agents on EC2 instances

- Create an Assessment template.

- Perform Assessment run.

- Review Findings against “Rules”.

You need to install the agent via wget or curl.

Rules Packages in Amazon Inspector

Network assessments:

- Network Reachability

Host assessments:

- Common Vulnerabilities and Exposures – The rules in this package help verify whether the EC2 instances in your assessment targets are exposed to common vulnerabilities and exposures (CVEs). Attacks can exploit unpatched vulnerabilities to compromise the confidentiality, integrity, or availability of your service or data. The CVE system provides a reference method for publicly known information security vulnerabilities and exposures. For more information, see https://cve.mitre.org/

- Center for Internet Security (CIS) Benchmarks – Amazon Inspector currently provides the following CIS Certified rules packages to help establish secure configuration postures

- Security Best Practices for Amazon Inspector – Use Amazon Inspector rules to help determine whether your systems are configured securely.

High – Describes a security issue that can result in a compromise of the information confidentiality, integrity, and availability within your assessment target. We recommend that you treat this security issue as an emergency and implement immediate remediation.

Medium – Describes a security issue that can result in a compromise of the information confidentiality, integrity, and availability within your assessment target. We recommend that you fix this issue at the next possible opportunity, for example, during your next service update.

Low – Describes a security issue that can result in a compromise of the information confidentiality, integrity, and availability within your assessment target. We recommend that you fix this issue as part of one of your future service updates.

Informational – Describes a particular security configuration detail of your assessment target. Based on your business and organization goals, you can either simply make note of this information or use it to improve the security of your assessment target.

Trusted advisor

Trusted advisor checks available:

- Cost Optimization

- Performance

- Security

- Fault Tolerance

- Service Limits

There are two tiers: the Basic plan, and the Business or Enterprise plan, whose customers get the full set of checks.

LINK: Trusted Advisor | Environment Optimization | AWS Support

KMS – Key management service


Keys generated in KMS are regional. IAM is global (users, groups, etc. are global); the keys are not.

If an object in an S3 bucket is encrypted with AWS KMS, it cannot be read even if the object is made public, because the reader also needs permission to use the key. Using KMS adds a layer of security.

Security administrator – can obtain the ownership of the key, can read the encrypted content.

System administrator – less privileged access recommended for the admins. Cannot change the ownership of the KMS keys.

External access to a key:

You need to set up access for the external account in the key policy, and the external IAM role must be allowed the action in its IAM policy.

- IAM policy – must be assigned to a user, group, or role. Defines the allowed actions: kms:Decrypt, kms:Encrypt, etc.

- Key policy – the policy is assigned to a specific key; it defines the administrators of the key, the users of the key, and the trusted accounts.

Scheduled key deletion – waiting period of 7-30 days

Key policy example:

{

"Id": "key-consolepolicy-3",

"Version": "2012-10-17",

"Statement": [

{

"Sid": "Enable IAM User Permissions",

"Effect": "Allow",

"Principal": {

"AWS": "arn:aws:iam::123456789876:root"

},

"Action": "kms:*",

"Resource": "*"

},

{

"Sid": "Allow access for Key Administrators",

"Effect": "Allow",

"Principal": {

"AWS": "arn:aws:iam::123456789876:user/Bruce.Banner"

},

"Action": [

"kms:Create*", "kms:Describe*",

"kms:Enable*", "kms:List*",

"kms:Put*", "kms:Update*",

"kms:Revoke*", "kms:Disable*",

"kms:Get*", "kms:Delete*",

"kms:ImportKeyMaterial",

"kms:TagResource",

"kms:UntagResource",

"kms:ScheduleKeyDeletion",

"kms:CancelKeyDeletion"

],

"Resource": "*"

},

{

"Sid": "Allow use of the key",

"Effect": "Allow",

"Principal": {

"AWS": "arn:aws:iam::123456789875:user/Bruce.Banner"

},

"Action": [

"kms:Encrypt",

"kms:Decrypt",

"kms:ReEncrypt*", "kms:GenerateDataKey*",

"kms:DescribeKey"

],

"Resource": "*"

},

{

"Sid": "Allow attachment of persistent resources",

"Effect": "Allow",

"Principal": {

"AWS": "arn:aws:iam::123456789876:user/Bruce.Banner"

},

"Action": [

"kms:CreateGrant",

"kms:ListGrants",

"kms:RevokeGrant"

],

"Resource": "*",

"Condition": {

"Bool": {

"kms:GrantIsForAWSResource": "true"

}

}

}

]

}

If you want to import your own key material, you must download a wrapping key and an import token.

Wrapping key algorithms supported:

RSAES_OAEP_SHA_256

RSAES_OAEP_SHA_1

RSAES_PKCS1_V1_5

Important points:

- The import parameters (wrapping key and import token) are valid for 24 hours. You cannot use the import token and wrapping key twice. Every import must have a different wrapping key and import token, even if you are importing the same key material.

- We cannot enable automatic key rotation for a CMK (customer master key) with imported key material. We can rotate such a CMK manually.

- If we import the key material, we can delete the key material immediately. If we are using AWS-generated key material, we can’t; the key can only be scheduled for deletion.

- KMS sits on multitenant hardware.

- When you encrypt plaintext, the CLI returns the key ID and the ciphertext blob (Base64-encoded).

- When you generate a data key, you get its ciphertext and plaintext versions plus the key ID.

- A data key is good to use for data bigger than 4 KB. Anything smaller can be encrypted directly by KMS.

DATA key caching

Data key caching allows you to cache data keys so that multiple data elements are encrypted with the same key. On the other hand, this approach can introduce an additional risk of data exposure.

- Cache the data key (its ciphertext and plaintext versions) to reduce the number of KMS API calls and stay within the KMS API limits.

- Useful when there is delay or latency.
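The idea can be sketched with a tiny cache that reuses one data key until it is too old or has been used too many times. This is an illustration only and not the AWS Encryption SDK implementation: `DataKeyCache`, its parameters, and the `fake_generate` function (a stand-in for a KMS GenerateDataKey call) are all invented for this example.

```python
import time
from typing import Callable, Optional, Tuple

DataKey = Tuple[bytes, bytes]  # (plaintext key, encrypted key)


class DataKeyCache:
    """Reuse one data key until it exceeds an age or usage limit."""

    def __init__(self, generate: Callable[[], DataKey],
                 max_age_s: float = 300.0, max_uses: int = 100,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._generate = generate
        self._max_age_s = max_age_s
        self._max_uses = max_uses
        self._clock = clock
        self._key: Optional[DataKey] = None
        self._created = 0.0
        self._uses = 0

    def get(self) -> DataKey:
        now = self._clock()
        expired = (self._key is None
                   or now - self._created >= self._max_age_s
                   or self._uses >= self._max_uses)
        if expired:
            self._key = self._generate()  # the only place KMS would be called
            self._created = now
            self._uses = 0
        self._uses += 1
        return self._key


kms_calls = []


def fake_generate() -> DataKey:
    """Stand-in for KMS GenerateDataKey; records how often it is called."""
    kms_calls.append(1)
    return (bytes([len(kms_calls)]) * 32, b"encrypted-copy")


cache = DataKeyCache(fake_generate, max_age_s=300.0, max_uses=2)
keys = [cache.get() for _ in range(3)]
print(len(kms_calls))
```

The limits are the trade-off mentioned above: higher values mean fewer KMS calls, but more data is exposed if a single cached key leaks.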

openssl rsautl -encrypt -in PlaintextKeyMaterial.bin -oaep -inkey wrappingKey_b1a19fee-7b98-4561-9525-d1e8f7c644d6_02161412 -keyform DER -pubin -out EncryptedKeyMaterial.bin

CMK Parameters :

- Alias – important when pointing an alias to a new key with new key material. Applications refer only to the key alias and not to the key itself.

- Creation date

- Description

- Key State = enabled / disabled

- Key material – AWS provided or customer provided (External)

Key material cannot be exported!

Ciphertext is not portable between CMKs.

You can import your own key material, but you cannot export keys from KMS.

Key rotation options

AWS managed

- By default the key is rotated every 3 years (AWS has since shortened this to every year). A new backing key is created. The old backing key stays available for decrypting your data.

Customer managed

- Once a year automatically (disabled by default). The old backing key is saved.

- On-demand manually: you create a new CMK and update the usage. Keys can be deleted; we control the rotation frequency.

Customer managed with imported key

- On-demand manually: you create a new CMK and update the usage. Keys can be deleted; we control the rotation frequency. You are responsible for keeping the old keys.

Links:

- https://docs.aws.amazon.com/kms/latest/developerguide/programming-aliases.html

- Configure S3 Bucket Policy to Store Only Objects Encrypted by KMS Key

- Rotating customer master keys – AWS Key Management Service

- FAQs | AWS Key Management Service (KMS) | Amazon Web Services (AWS)

- Using policy conditions with AWS KMS – AWS Key Management Service

Notes:

A KMS key cannot be used to SSH into EC2, but it can be used to encrypt S3, RDS, Redshift, etc.

To use a key pair for SSH you need to import it in the Key Pairs section in EC2.

KMS & EBS

- The root volume can be encrypted in the EC2 launch process.

- You can create a snapshot of an existing EC2 volume and encrypt a copy of it; you cannot encrypt an existing volume by modifying it.

How to encrypt an existing volume afterwards:

1) Detach

2) Create snapshot

3) Create AMI (can be private or public, share with another AWS account)

4) Copy the AMI – with encryption

5) Choose the master key

Important: Keys are region-specific.

EC2 and Key pairs relationship

The public key on the EC2 instance is in the hidden directory .ssh, in the file authorized_keys. To access it you may need to elevate the privileges with sudo su.

The public key can be taken from metadata 169.254.169.254/latest/meta-data/public-keys/0/openssh-key

Adding new public key:

You can append new public keys to authorized_keys. You will then be able to connect to the EC2 instance with the new private key. A new key pair can be uploaded to S3 if you give the EC2 instance a role with S3 full access.

Question: What happens if you delete the key pair?

- When you delete the key pair in the console, it does not affect the key stored on the EC2 instance. The public key in the EC2 metadata stays the same.

- If you create a new instance from an AMI clone, it keeps all authorized keys, and the new public key that you generate for the instance is added.

- So if you lose the keys, you can clone the instance and add a new key pair. Old keys can be deleted.

You cannot use SSH with KMS. (You cannot export the keys)

You can use SSH with HSM. (You can export the keys)

KMS WHITE PAPERS:

- https://d0.awsstatic.com/whitepapers/KMS-Cryptographic-Details.pdf

- https://d0.awsstatic.com/whitepapers/aws-kms-best-practices.pdf

HSM (Hardware security module)

HSM has 2 partitions: one for AWS monitoring and a second for storing the customer keys. HSM supports asymmetric keys, which was its advantage for a long time. Currently, AWS KMS also supports asymmetric keys.

FIPS 140-2 – standard for approved cryptographic modules, with levels 1-4. HSM is validated at level 3.

Thank you for reading!